Here at Oasis Digital, we use Git for source control on nearly all of our projects. There are many ways to use Git, and after many projects we have converged on a set of effective practices. We have found that the approach described here works very well for almost everything we do, though of course as a consulting organization we sometimes adjust things to meet a specific customer need.

Why Git?

Ubiquity

A decade ago, distributed source control arrived suddenly in the mainstream, after years as a niche segment. There were a number of contenders, but Git “won” by a large margin. Today Git is essentially the default choice, the powerful choice, the ubiquitous choice.

Incidentally, Git’s endless flexibility is a major reason it won the race. Its competitors typically had better ergonomics, easier adoption, easier understanding… but less flexibility. Flexibility means that organizations large and small can use Git in a way that meets their specific needs.

Technical excellence

We have never lost a line of code due to a defect in Git, which we cannot say of some other systems. Further, we have never needed an operation that is fundamentally impossible with Git. We have found that, within the confines of our project sizes, it scales extremely well. (Though see the section at the end on limitations in this area.)

Multiplatform

Our projects are often developed across Linux, Windows, and Mac. Git works very well across all of them. Notably, it offers solutions (rather than a “head in the sand” approach) for line-ending differences among platforms. Yes, it is inconvenient to deal with line-ending differences, but Git has the tools to do so and get good results, without pretending that one platform is another.
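As one illustration of the line-ending tooling mentioned above, a repository can declare its policy in a `.gitattributes` file. The sketch below is only an assumption about a reasonable setup: the throwaway repository, the file patterns, and the file names are hypothetical, not a description of any particular project's configuration.

```shell
# Throwaway repository for the demonstration (hypothetical path).
repo=$(mktemp -d)
git init -q "$repo"
cd "$repo"

# Declare the line-ending policy per file type (patterns are illustrative).
cat > .gitattributes <<'EOF'
# Let Git detect text files and normalize their line endings in the repository.
* text=auto
# Shell scripts always check out with LF, Windows batch files with CRLF.
*.sh text eol=lf
*.bat text eol=crlf
# Images are binary: never convert line endings.
*.png binary
EOF

# Ask Git which attributes apply to a path; the file need not exist yet.
git check-attr eol -- build.sh    # prints "build.sh: eol: lf"
git check-attr text -- logo.png
```

Committing the `.gitattributes` file means every developer gets the same conversion rules regardless of platform or local settings.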

Distributed

Git can operate without a network connection, and more importantly, it operates locally at the speed of a local machine rather than at the speed of a potentially overwhelmed, faraway server. 90% of Git operations are completely local. This is an enormous benefit during daily use, though it has a downside – more risk of a developer forgetting to push code to a server. We handle that concern so well in other ways (project team discussions) that it has never caused difficulty.

Ecosystem

Due to its ubiquity, Git has spawned a vast ecosystem of related tools. Nearly every editor and IDE understands Git. Nearly every build or continuous integration mechanism understands it. Nearly every code review tool understands it. There are numerous graphical Git tools for every platform, and Git does not depend on any one vendor or team to keep producing quality results.

How we use Git

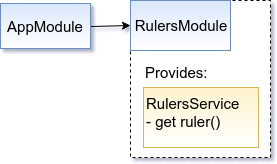

Use it as an expert

As with most other tools we use, we are committed to expert-level mastery, not muddling through. Oasis Digital developers learn to understand the fundamental Git data model: a tree of commits, each containing a tree of files. We learn the essential operations and common variations. We learn to understand what the commit tree looks like, what we want it to look like, and how to choose the right command to get from one state to the other. We are “source control nerds”.
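To make that data model concrete, the following sketch builds a throwaway repository and inspects one commit and the tree of files it points to, using Git's `cat-file` plumbing command. The repository path, the identity, and the file name are made up for illustration.

```shell
# Throwaway repository with a local identity (all names are hypothetical).
repo=$(mktemp -d)
git init -q "$repo"
cd "$repo"
git config user.name "Example Dev"
git config user.email "dev@example.com"

# Record one file in one commit.
echo "hello" > README.txt
git add README.txt
git commit -q -m "Add README"

# A commit is an object that names a tree, plus author, committer, and message.
git cat-file -t HEAD              # prints the object type: commit
git cat-file -p HEAD

# The tree lists the files (blobs) and subdirectories (trees) in that commit.
git cat-file -p 'HEAD^{tree}'
```

Every later commit adds a `parent` line pointing at its predecessor, which is what links the commits into the tree that the rest of this page talks about.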

We believe this makes sense because source control is not merely an ancillary tool: change management is deeply fundamental to robust, long-lived systems. Source control is not an inconvenience; it is an accelerator.

We treat (portions of, read on) the Git commit tree as putty in our hands. This is an intentional trade-off, versus the notion of using a restricted subset of Git, but we believe it is the right trade-off for our use cases.

Develop on branches

We develop on branches, not on master. In all but the smallest projects, branches go through review and discussion before landing on master.

We use many branches, large and small. We don’t pointlessly mix unrelated changes in the same branch. Git switches among and manages branches almost instantly, so the cost of using additional branches is nearly zero. We have found that the mental overhead of managing more than one branch with a tool is much less than the mental overhead of juggling multiple unrelated changes in the same branch, a phenomenon we see regularly when developers use tools that make branching difficult or slow.

Branching model – varies

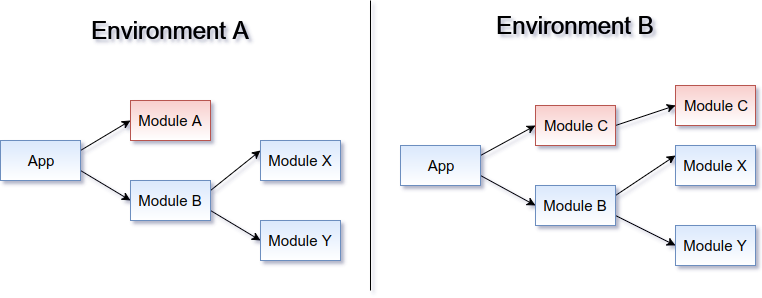

We manage those branches differently, depending on the needs of a project:

- small branches, directly off master

- shared branches, as needed

- release branches, to support old releases

- development branches

- trunk-based development, with small or large branches

Master always works

Master (and for more complex projects, certain other branches) always works. Code is reviewed and tested before going on master, not only after. If you read and adopt only one bit from this whole page, this is the most important one. Review and commit and make the code good before it goes on master; don’t put junk on master and hope for a drive-by review-fix later.

Master strictly improves

Because we test and review and fix code before it goes on master, master (again, for complex projects, certain other branches) strictly improves. Each master commit adds at least one improvement or fixes at least one problem, without making the software worse. Of course we do not reach the standard perfectly every time, but this is the standard we aim for, and we reach it most of the time.

Immutable master; mutable development branches

Except in rare cases, once commits are on master we treat them as permanently immutable. They form a long-term record of the history of the project. They are a curated, comprehensible telling of the story of the development.

The work performed on branches is iterated on repeatedly there. Sometimes it is squashed and often it is rebased. Only when it is ready (to act as a strict improvement to master) does it get a final review, squash, rebase, and merge. (As with everything else, there are exceptions for certain special cases.)

Incidentally, this means that we very rarely have a merge commit on master. The long-term history of our development efforts is easy to read, as a mostly straight line of medium-sized (not too large, not too numerous) commits.
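The branch life cycle described above can be sketched end to end in a throwaway repository. This is a minimal illustration under stated assumptions, not a record of the exact commands used on any project: the branch name, file names, and commit messages are hypothetical, and the non-interactive `git reset --soft` squash stands in for what would often be done with an interactive rebase.

```shell
# Throwaway repository (hypothetical names throughout).
work=$(mktemp -d)
git init -q "$work/repo"
cd "$work/repo"
git symbolic-ref HEAD refs/heads/master   # name the initial branch "master"
git config user.name "Example Dev"
git config user.email "dev@example.com"

echo "v1" > app.txt
git add app.txt
git commit -q -m "Initial version"

# Work happens on a branch, in small messy steps.
git checkout -q -b feature/greeting
echo "hello" > greeting.txt
git add greeting.txt
git commit -q -m "WIP: start greeting"
echo "hello, world" > greeting.txt
git commit -q -a -m "WIP: fix wording"

# Meanwhile, master moves on.
git checkout -q master
echo "v2" > app.txt
git commit -q -a -m "Update app to v2"

# Rebase the branch onto the current master...
git checkout -q feature/greeting
git rebase -q master

# ...and squash its steps into one curated commit.
git reset -q --soft master
git commit -q -m "Add greeting"

# Land it on master as a fast-forward, so no merge commit is created.
git checkout -q master
git merge -q --ff-only feature/greeting
git log --oneline --graph
```

After the fast-forward, master holds three commits in a straight line, and the two work-in-progress commits no longer appear in its history.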

Most of our developers on most of our projects are not even able to push to master, and are hardly affected by this restriction in daily work. Even with this process, a typical developer will have a commit land on master between one and a few times per week, depending on numerous factors around how finely the work is divided in the project.

Commit early, commit often, commit anything, push

The corollary of our high bar for work that goes on master is an extremely low bar for work that can go onto a branch and be pushed. We rarely go to sleep with uncommitted or unpushed code. When we need another developer to look at our work in progress, we commit it and push it as work in progress. When a new developer starts, they often commit and push code to a branch on the first day. By keeping this bar low, we provide the maximum opportunity for new developers to become fluent with source control and to use our tooling as a communication mechanism (“here is some code, will you look at it for me?”), early and often.
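In command terms, the low bar looks roughly like the sketch below. It assumes a local bare repository standing in for the shared server, and the branch name, file names, and commit messages are hypothetical.

```shell
# A local bare repository plays the role of the shared server (assumption).
work=$(mktemp -d)
git init -q --bare "$work/origin.git"

# A developer's working repository, connected to that server.
git init -q "$work/dev"
cd "$work/dev"
git symbolic-ref HEAD refs/heads/master   # name the initial branch "master"
git config user.name "Example Dev"
git config user.email "dev@example.com"
git remote add origin "$work/origin.git"

echo "v1" > app.txt
git add app.txt
git commit -q -m "Initial version"
git push -q -u origin master

# End of the day: the work is unfinished, but it still gets committed and pushed.
git checkout -q -b wip/report-export
echo "half-finished export code" > export.txt
git add -A
git commit -q -m "WIP: report export, not ready for review"
git push -q -u origin wip/report-export

# Nothing is left uncommitted, and the branch now exists on the server.
git status --short
git ls-remote --heads origin
```

Because the work sits on its own branch, pushing it costs nothing in terms of master's quality, and a colleague can fetch it immediately for a casual look.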

Squash out the mess

There is an inevitable, human tendency to be careful with anything that will be “part of your permanent record”. This is built into all of us, though it seems to be more pronounced in some cultures than others. But by combining our very low bar for what can be committed and pushed, with a policy of squashing minor steps together to yield an aggregated, high-quality change, we moderate this tendency very effectively. Code can be pushed any time for just a casual look. It won’t be part of the permanent history until it is good enough. No fear.

Left to its own devices, the tendency to be careful would force developers to “raise the bar” of what gets committed or pushed. A rational developer (regardless of policy) in such an environment must be careful about what they put in source control. We have found that this inevitably pushes developers to do more of their work without source control: for example, by shuffling files locally outside of the source control tool, by leaving work unpushed, etc.

Source control is a very powerful tool, and a low-bar-push-often-squash-rebase approach puts that power into the hands of every developer, individually as well as all developers working together.

But squash carefully

Still, squash and rebase are potentially troublesome features. So when we squash, we do not squash arbitrarily. We aim to squash groups of related changes, worked on along a path to achieve a specific feature, but not to squash unrelated work. One minor caveat, though: to tell the story of the development in the most comprehensible way, the permanent master commits should be both medium in size and medium in number. Therefore we sometimes squash together a set of individually very small changes, when we can do so without creating difficulties.

Use a GUI

Many very skilled developers are fond of command line interfaces, for many good reasons. The Git command line interface, while slightly problematic in some ways, is extremely powerful for making changes. However, it is not particularly well-suited for understanding a Git commit tree, especially on a busy project with numerous concurrent branches. For this reason, when working heavily with Git we always use a graphical interface at least to visualize and understand the commit tree, even when we use the command line for manipulation. There are various high quality choices for GUIs on each platform, and on all platforms the built-in “gitk” interface is very helpful in a pinch to understand the commits – without any additional tool selection, download, or install.
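When no GUI is at hand, the command line can draw a rough text rendering of the same commit tree. The sketch below is a fallback illustration only, not a substitute for the graphical view recommended above; the repository and branch names are hypothetical.

```shell
# Throwaway repository with two branches diverging from master (hypothetical).
repo=$(mktemp -d)
git init -q "$repo"
cd "$repo"
git symbolic-ref HEAD refs/heads/master   # name the initial branch "master"
git config user.name "Example Dev"
git config user.email "dev@example.com"

echo "base" > base.txt
git add base.txt
git commit -q -m "Base"

git checkout -q -b feature/a
echo "a" > a.txt
git add a.txt
git commit -q -m "Add a"

git checkout -q master
git checkout -q -b feature/b
echo "b" > b.txt
git add b.txt
git commit -q -m "Add b"

# Text rendering of every branch, with branch names attached to commits.
git log --graph --oneline --decorate --all

# On a machine with a display, the built-in viewer shows the same tree:
# gitk --all
```

The `--all` option matters in both tools: without it, only the history of the current branch is shown, which hides exactly the concurrent work that makes a visual overview useful.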

Work together, learn, teach

We work together regularly. A group of developers working on the same project will sit in a collaboration space together (inviting remote members online) at a giant screen attached to a fast development machine. We work on the hard problems together, and we review tricky code together. We learn and teach together how to use all of our tools, including Git.

Caveats, limitations, and context

Small, medium, and large, but not huge

Git and the practices here are useful on our projects of small, medium, or largish size. Beyond a certain size, many of the practices would still work but Git becomes awkward. For example, some of the largest software operations in the industry (Google, Facebook) use a “monorepo” with all of the code for all of their projects and nearly all dependencies thereof. A different kind of toolset is needed for such extremely large repositories.

Source code, but few binary assets

Further, we keep small, medium, and somewhat large collections of source code in Git; when our work has occasionally involved many large media assets, we have used other means to track them. Git specifically is probably not a suitable choice for (for example) a large-scale game development effort with hundreds of gigabytes of binary assets.

Skill and understanding needed

Here at Oasis Digital, we are not in the “get a bunch of people to grunt out some code” business. Instead we are in the “recruit and hire good people, and help them become great” business. This is necessary for us, because of the premier customers we serve, and because we not only develop, we also teach. We are all about deep mastery of tools. Our Git approach fits very well with this context.

We’ve sometimes heard the objection to Git, that it is difficult for less skilled developers to understand fully and use correctly. This is possibly true, but it is not that important to our context – and we think it is not that important at all. Anyone with the capability of understanding software enough to develop quickly, efficiently, and with quality, certainly has the capability to master Git.

With great power…

Even with good understanding, Git is still relatively easy to misuse. It offers numerous very powerful commands; it is like a chainsaw and a machine shop. It is not like a set of safety scissors.

We have found that this power punishes developers who don’t look at a graphical representation of the commit tree regularly. It rewards developers who do.

Syntax

Even keeping in mind the power of the Git command vocabulary, its CLI syntax leaves much to be desired. It shows clear signs of having evolved awkwardly, with early accidental complexity retained for backward compatibility.

However, we have found that developers who have the discipline to look at a graphical representation of the commit tree generally gain a better understanding of the operation they want to perform, and are more likely to perform it correctly even when using the CLI.

Beware neutered GUIs

Some Git GUI products aim to simplify Git “for the masses”. In the process they manage to do a terrible job at the most essential function: visualizing and understanding the commit tree. Beware of a tool which resists showing you this tree. Even following the practices described here (where master is a straight line most of the time), it is frequently necessary to understand a set of concurrent, possibly tangled branches when a project is under heavy many-developer work. The right GUI makes this fast and easy.

Churn

I think the Git community is now on its third major mechanism for subproject use. We avoid subprojects as much as possible, but experience some frustration when we must use them.

Revisionist history

Our approach clearly and intentionally yields a revisionist, streamlined history. As described above, the resulting history is crafted for understanding, and for (rarely) backing out the final set of related changes for some work rather than miscellaneous partial changes. Still, some people are uncomfortable with the revisionism, and prefer a full record of every step along the way. This is a trade-off, and we have found that the revisionist approach has more upside than downside.

Discipline needed

Even with these restrictions, it still takes discipline to avoid creating a branch from a spot in history (something not on master) that will change in the future.
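The sketch below shows the situation this warns about, and one way to recover from it with `git rebase --onto`. It is an illustration under assumptions: the branch names, file names, and commit messages are hypothetical, and the old branch tip is saved in a variable here, whereas in practice it might have to be recovered from the reflog.

```shell
# Throwaway repository (hypothetical names throughout).
work=$(mktemp -d)
git init -q "$work/repo"
cd "$work/repo"
git symbolic-ref HEAD refs/heads/master   # name the initial branch "master"
git config user.name "Example Dev"
git config user.email "dev@example.com"

echo "base" > base.txt
git add base.txt
git commit -q -m "Base"

# A mutable development branch with work-in-progress commits.
git checkout -q -b feature/a
echo "a1" > a.txt
git add a.txt
git commit -q -m "WIP: a, step 1"
echo "a2" >> a.txt
git commit -q -a -m "WIP: a, step 2"

# The risky step: a second branch starts from feature/a, not from master.
git checkout -q -b feature/b
echo "b" > b.txt
git add b.txt
git commit -q -m "Add b"
old_a=$(git rev-parse feature/a)   # remember the pre-squash tip of feature/a

# feature/a is squashed and lands on master, so its old commits are replaced.
git checkout -q feature/a
git reset -q --soft master
git commit -q -m "Add a"
git checkout -q master
git merge -q --ff-only feature/a

# feature/b still sits on the old commits. Transplant only its own commit
# (everything after old_a) onto the new master.
git rebase -q --onto master "$old_a" feature/b
git log --oneline --graph --all
```

Without the `--onto` form, a plain rebase of `feature/b` would try to replay the obsolete work-in-progress commits as well, which is where the tangles and conflicts come from.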